Anthropic Brings AI Agents to Financial Services

Anthropic has just released ten ready-to-run AI agent templates purpose-built for financial services.

https://www.anthropic.com/news/finance-agents

Delivered as plugins for Claude Cowork and Claude Code, these agents target the most labour-intensive workflows in the industry:

• client meeting preparation

• market research

• financial model construction

• month-end close

• statement auditing

• and more.

Each template ships with its own skills, connectors, and subagents — a reference architecture that firms can adapt to their own risk policies and approval flows.

The promise is striking: work that previously took months can now be completed in days.

What makes this moment particularly striking is its timing. Less than a month ago, Anthropic's Mythos model preview sent ripples of concern through the global IT security community.

Now, that same forward momentum is arriving at the doorstep of financial services.

What’s next, another industry vertical? And what will be the consequences of this standardisation and AI proliferation?

A Platform Play, Not Just a Product Launch

It would be a mistake to view this as a standalone release. Anthropic is executing a deliberate vertical expansion strategy — rolling out targeted agent frameworks across Software Engineering, Financial Services, Legal, and Logistics, each one built on the same robust foundation of Claude Code, Claude Cowork, and an expanding MCP connector ecosystem.

Every such release carries structural implications for the software and digital tools that currently serve those industries. Business process logic, industry compliance standards, real-time data exchange, and decision-making workflows are all in scope. These are not incremental improvements — they are architectural challenges to the status quo.

The Google Parallel

This trajectory is reminiscent of Google's evolution on the internet. It began as one search engine among several — alongside AltaVista and Yahoo — before methodically expanding into mail, maps, photos, mobile, commerce, and travel. Today, Google is embedded in virtually every layer of daily life. The question worth asking is whether Anthropic is charting the same course: starting with developer tools and now moving industry by industry, gradually becoming the operational backbone of how knowledge work gets done.

The Standardisation Paradox

There is a subtler consequence to this shift that deserves attention. Standardisation, by definition, erodes differentiation. When accounting firms, analyst teams, and financial institutions all operate from the same agent templates, their workflows converge — and with them, potentially their outputs. The competitive edge that once came from proprietary processes or institutional knowledge becomes harder to sustain.

This is not without precedent. The widespread adoption of SAP enterprise software is instructive: it brought enormous efficiency gains across industries, but it also locked companies into shared data architectures and process logic that constrained their capacity for innovation. The same dynamic could unfold here, only at greater speed and scale.

What Comes Next

We are at the beginning of an industry-wide inflection point. Anthropic is clearly building an ecosystem — one that, like Apple's, thrives on depth of integration, proprietary tooling, and network effects. That combination typically commands a premium, both commercially and strategically.

The more open question is what this means for the open-source LLM market. As Anthropic's closed ecosystem deepens its industry footprint, will open-source alternatives carve out a meaningful counter-position — or will the convenience and compliance guarantees of a fully integrated platform prove too compelling for enterprises to resist? That tension will be one of the defining dynamics of the next few years in AI.

Pioneers that deliver services through MCP

In an earlier blog post I mentioned the new trend of "Services As the Software". AI-agent-driven software delivery is no longer about providing a tool and digitalizing a workflow; rather, it helps you do the work and achieve the outcome you need.

In workflow digitalization and automation, the main communication method is API calls that exchange data in an agreed format, even internally between software components. For outcomes that depend on continuous data feeds and reasoning interactions, MCP (Model Context Protocol) has become the new standard. Delivering intelligent, AI-based services with domain expert knowledge through MCP, and charging for them, has become an interesting business model.
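As a rough illustration of the paragraph above, here is a minimal Python sketch of what a metered MCP call could look like. MCP messages use a JSON-RPC 2.0 envelope, and `tools/call` is the method a client uses to invoke a tool on a server. Everything else is an assumption made up for this example: the tool name `credit_risk_summary`, its arguments, and the per-call price list.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def make_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical price list: the provider charges per tool invocation.
PRICE_PER_CALL_EUR = {"credit_risk_summary": 0.40}


def invoice(requests: list[dict]) -> float:
    """Sum the per-call price of every billable tool call."""
    total = sum(PRICE_PER_CALL_EUR.get(r["params"]["name"], 0.0) for r in requests)
    return round(total, 2)


calls = [
    make_tool_call("credit_risk_summary", {"company_id": "ACME-001"}),
    make_tool_call("credit_risk_summary", {"company_id": "ACME-002"}),
]
wire_message = json.dumps(calls[0])  # what would be sent to the MCP server
amount_due = invoice(calls)
```

Per-call pricing is only one possible charging model; a subscription or outcome-based fee would replace the `invoice` function, while the protocol message stays the same.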

I asked Claude to help me summarize some of the pioneers in this field, with their business and pricing models, for inspiration.

(Disclaimer: AI can make mistakes, for deep dive please doublecheck the answers on relevant sources.)

Business Intelligence Report · April 2026

MCP as a Service: Frontrunners Across Business Sectors

A strategic overview of pioneer companies delivering Model Context Protocol services outside software engineering, with sector analysis and charging models.

[Interactive report not reproduced here: 12 examples, filterable by sector, followed by charging model archetypes.]

Is SAFe becoming obsolete as agentic AI lifts the bottleneck?

Just as SAFe has finally established itself as the standard for ways of working and planning in the IT industry, the arrival of agentic AI is shaking some of the foundations of its manifesto and assumptions.

The whole SAFe framework was built on the assumption that the bottleneck in software development is developer and engineering resources: their planning, collaboration, productivity and availability. To cope with this, Scrum and SAFe established ceremonies such as the daily scrum, backlog refinement, PI Planning and sprint planning. When development cycles take weeks and months, the overhead hours spent on these seem small. But if agent-driven development and code generation shrink the cycle to minutes and hours, all that overhead and latency in planning and collaboration becomes counterproductive.

A discussion with our CTO yesterday about best practices for coding with Claude somehow turned into a very interesting discussion about how AI-agent-driven software engineering is changing the SAFe ways of working we use for planning and follow-up.

We know that the SAFe manifesto, with all its ceremonies and roles, is built upon one assumption: that the bottleneck in software engineering is the capacity of our developers, their availability, their productivity and their ability to scale.

Now, with agentic AI-based coding, this bottleneck is being lifted for the first time. With unlimited tokens and computing resources, most products could conceptually be built within a week, because producing code, tests and validation, as well as debugging, can be done in minutes and hours with the right design and orchestration, rather than in days and months.

What does this mean for SAFe's ways of estimating, refining and breaking down the backlog? Does the product owner have enough capacity to validate and produce requirements as fast and as precisely as an AI agent produces outcomes? The dramatically reduced lead time in code delivery and testing also casts a shadow over the traditional long planning cycle with its increments and PIs. It is becoming clear that there is a fundamental mismatch between the SAFe methodology and the way production works in a hybrid model of AI agents and human developers.

The blog post from Steve Jones points out even more gaps and contradictions.

We are living in an age of dramatic change. It was just 6-7 years ago that people started to throw away the traditional waterfall methodology for managing projects and initiatives, dismiss the ITIL processes for operational governance in IT, and embrace SAFe as the cure for all problems.

Away with documentation, away with workflows and clear boundaries between roles and responsibilities.

In with self-governance with complete transparency, trust in team collaboration with day-to-day intensive communication;

In with town-hall-sized gatherings for days-long collaboration between teams on estimation and dependency mapping;

In with strict backlog and resource guardianship, so that teams determine the development pace from their availability and vacation planning.

Those premises no longer hold in the age of agentic AI. As agentic AI tools and technologies evolve month by month, the tide of transformation in ways of working and governance will arrive, this time probably sooner than Scrum did last time. SAFe is no longer safe.

The new keywords for governance are: precision and clarity in requirement documentation, guardrails for AI agents, token budget planning, validation and acceptance criteria.
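To make "token budget planning" and "guardrails" a little more concrete, here is a minimal Python sketch of a budget that an orchestrator could check before letting an agent start a task. The class, the task names and the numbers are all hypothetical; a real setup would read actual token usage from the model provider's API responses.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a task would push the agent past its token budget."""


@dataclass
class TokenBudget:
    limit: int                                # tokens planned for this work item
    spent: int = 0
    log: list = field(default_factory=list)   # (task, tokens) audit trail

    def charge(self, task: str, tokens: int) -> int:
        """Record token usage and return what is left; refuse to overspend."""
        if self.spent + tokens > self.limit:
            left = self.limit - self.spent
            raise BudgetExceeded(f"{task!r} needs {tokens} tokens, only {left} left")
        self.spent += tokens
        self.log.append((task, tokens))
        return self.limit - self.spent


budget = TokenBudget(limit=100_000)
remaining = budget.charge("generate code", 60_000)   # 40_000 tokens left

try:
    budget.charge("generate tests", 50_000)           # would exceed the plan
    blocked = False
except BudgetExceeded:
    blocked = True                                    # the guardrail stops the agent here
```

The point of the sketch is the planning question it forces: someone has to decide the limit per work item up front, much as capacity was planned per sprint before.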

Below are some summaries Claude provided when I confronted it with this question; I think they are pretty sensible.

The emerging consensus from practitioners is not "throw SAFe away" but rather a significant reorientation:

• Estimation is shifting from effort-based (story points) toward outcome-based metrics and AI-assisted forecasting

• PI Planning is evolving toward intent-setting and dependency mapping, with less focus on capacity allocation

• The human premium moves decisively toward problem definition, outcome validation, stakeholder alignment, and ethical governance of AI outputs

• New hybrid team patterns are forming where humans orchestrate fleets of agents rather than write every line themselves

• Governance and quality gates (the Definition of Done, architecture guardrails, security reviews) become more important, not less, because agents produce volume that humans must still be accountable for

Reflections on "Service as the new software"

To better understand the fundamental changes that agentic AI is bringing to the software market (especially the SaaS side), I recommend a blog post from Julien Bek.

This blog post provides answers to the question:

Why will AI-agent-native software companies that provide end-to-end services for different domains triumph over license-selling SaaS tooling firms?

My mental exercise starts today with reading a blog post whose title I noted some weeks ago.

"Services: the new software"

The author, Julien Bek, provides critical insight into and analysis of the ongoing changes in the agentic AI market. First I will quote some of his most insightful and concise summaries. Later we will look at how this is changing the IT Service Management and Observability space specifically, and at the latest movements there in relation to Julien's observations.

1. "Writing code is mostly intelligence. Knowing what to build next is judgement".

As another blog author points out, the traditional relationship between human beings and software defines software and applications as the tools into which we build intelligence, while we leave decisions and judgement to people.

What we are seeing right now is AI rapidly taking over the intelligence work in multiple domains, with software engineering furthest ahead (over 49% of tool calls are made by agents). Other domains, like legal, finance, accounting and customer service, will follow.

2. "A copilot sells the tool. An autopilot sells the work"

AI tools were first put into the hands of IT professionals as support, to increase productivity and efficiency. With AI agents, however, the AI tool is no longer a copilot but an autopilot that accomplishes the complete work for the customer. It delivers an outcome, and this is what a service is!

Many SaaS tools live on a copilot model, helping professionals conduct data administration more efficiently with built-in standards and automation. Agentic AI tools, whether custom built or from an AI-agent-native vendor, deliver the outcome to the customer instead of just support. Accounting software, for example, has been a help in doing the bookkeeping, while agentic AI will deliver the book closing as well.

3. "The higher the intelligence ratio in any field, the sooner autopilots will win."

Across expert domains, the more specialised the domain and the more complicated the topic, the more the customer will appreciate an autopilot. This holds for traditionally highly specialist areas like legal, tax advisory and insurance brokerage, as well as IT managed services. In plain English: the less the customer understands the problem, the more likely the customer is to embark on an AI agent journey, as long as the outcome is equivalent.

4. "Today’s judgement will become tomorrow’s intelligence."

As AI agents improve every day at understanding context and correcting historical mistakes, more and more of the judgement part can also be converted into rules and conditions for AI to automate. This is the same journey we saw when computers started to play chess.

As judgement and intelligence converge in the future, which areas will be easiest to tackle first? Julien Bek gives his forecast.

If a task is outsourced today, it is likely to be at the top of the list for an AI autopilot. Why?

The reason is simple:

1. The customer has already accepted that the work is done externally

2. There is a clear scope and budget for it

3. The buyer is already purchasing an outcome

"Replacing an outsourcing contract with an AI native service provider is a vendor swap. Replacing headcount is a reorg".

Julien has even provided an opportunity map of the business domains where AI automation has the greatest potential and the quickest gains with autopilot.

Now, coming to our own domain of IT Service Management and Observability: what are vendors currently doing to take on this copilot-to-autopilot transition?

I again asked Claude to provide me with a summary of the major functionalities and their availability.

My takeaway after summarizing all this info:

• If you want to build a native AI-agent-driven product that delivers an outcome instead of being sold as a tool, Julien has pointed out the opportunity

• The convergence from copilot to autopilot means that observability tooling is more and more focused on delivering the most important service outcome, stability and uptime, with the help of AI agents and less manual intervention.

• This is what people have been buying IT Service Management tooling and processes to achieve (though manually), which means that the more monitoring becomes automated and AI driven, the less dependent IT organizations will be on ITSM tool functionality. The close cooperation between ServiceNow and Dynatrace shows ServiceNow is feeling the heat.

Agentic AI Functionality for Major Observability Vendors

A summary of Agentic AI functionalities for the major observability vendors by Claude

AI Agent Capabilities by Vendor

Triage automation, root cause analysis, and remediation across the major observability and ITSM platforms — reflecting product releases and announcements through April 2026.

| Vendor | AI agent / product | Triage & root cause analysis | Automation & remediation | Availability |
|---|---|---|---|---|
| Splunk / Cisco (AgenticOps) | Troubleshooting Agent, Triage Agent, ITSI Episode Summarization, Event iQ, AppDynamics AI | Automatically correlates MELT signals; surfaces ranked probable causes across full stack including K8s; AI-directed RCA in Observability Cloud and AppDynamics; 1-click incident management target | SOAR playbook authoring; AI Playbook Authoring (natural language → SOAR playbooks); Webex war-room auto-creation; MCP server integration; remediation recommendations | GA: Troubleshooting Agent (Q1 2026). Preview: Triage Agent & Playbook Authoring |
| Dynatrace (Dynatrace Intelligence) | Davis AI, Davis CoPilot, SRE Agent, Developer Agent, Security Agent | Deterministic causal AI maps billions of dependencies via Smartscape topology; pinpoints exact root cause without hallucination; natural language RCA summaries; log "explain" AI; 90% MTTI reduction reported by customers | Agentic K8s remediation; workflow automation; ServiceNow integration; GitHub Copilot coding agent for vulnerability remediation; MCP server; self-healing system target; supervised autonomy model | GA: Davis AI / CoPilot. Preview: SRE / Dev / Sec Agents (Perform 2026) |
| Datadog (Bits AI) | Bits AI SRE, Bits AI Dev Agent, Bits AI Security Analyst | Always-on autonomous SRE; investigates alerts before engineer opens laptop; multi-hypothesis parallel testing (validated / invalidated / inconclusive); learns from investigations via memory; 70% MTTR reduction reported | 7 in-loop triage actions (Slack, Teams, Jira, PagerDuty, incident creation); Dev Agent auto-generates PRs with code fixes from observability data; Security Analyst triages SIEM signals autonomously; human-in-loop approvals retained | GA: Bits AI SRE (Dec 2025). Beta: Dev Agent / Security Analyst |
| Elastic (Elastic AI / Workflows) | AI Assistant, Attack Discovery, Elastic Workflows, Agent Builder, Auto Migration | AI Assistant interprets logs, traces, errors, and runbooks in context; Attack Discovery triages alerts and maps to MITRE ATT&CK; ML-based log anomaly detection and grouping; inline AI surfaces RCA without requiring a chat session | Elastic Workflows (native automation engine): rules-based + agent-driven steps; codifies repeatable SOC triage; agents handle novel/unknown scenarios dynamically; Jira/PagerDuty/Slack connectors; SIEM migration from Splunk/QRadar | GA: AI Assistant + Agent Builder. Preview: Elastic Workflows (Feb 2026) |
| Palo Alto Networks (Cortex XSIAM + AgentiX) | Cortex XSIAM, Cortex AgentiX, Chronosphere, Unit 42 Intel | ML-driven alert aggregation and stitching into incidents; automated triage at machine speed; trained on 1.2B playbook executions; causal correlation across EDR, XDR, SIEM, SOAR, CSPM; 87% alert volume reduction reported | AgentiX: prebuilt agents plan, reason and execute autonomously; 98% MTTR reduction / 75% less manual work claimed; SOAR playbook automation; Chronosphere telemetry pipeline filters noise (30%+ volume reduction); $1B+ cumulative XSIAM bookings | GA: XSIAM + AgentiX in Cortex Cloud. Integrating: Chronosphere (Jan 2026) |
| New Relic (Intelligent Observability) | SRE Agent, New Relic AI, Agentic Platform, iRCA, MCP Server, Logs Intelligence | SRE Agent: next-gen triage, RCA, incident lifecycle management; Intelligent RCA uses topology + probabilistic models; AI log alert summarisation auto-extracts error patterns; MCP server feeds observability context to any external agent | No-code Agentic Platform: visual drag-and-drop agent builder for SREs; Workflow Automation (GA); integrates with ServiceNow, Gemini Code Assist, GitHub, Slack, Zoom; partner-led CI/CD remediation model | GA: New Relic AI + Workflow Automation. Preview: SRE Agent + Agentic Platform (Feb 2026) |

Data summarized by Claude on Apr 20, 2026

Disclaimer: AI can make mistakes, for deep dive please doublecheck the answers on relevant sources.

AutoOps for Elastic Cluster

Elastic announced on Feb 25 that Elastic AutoOps is now free for all.

https://www.elastic.co/blog/autoops-free

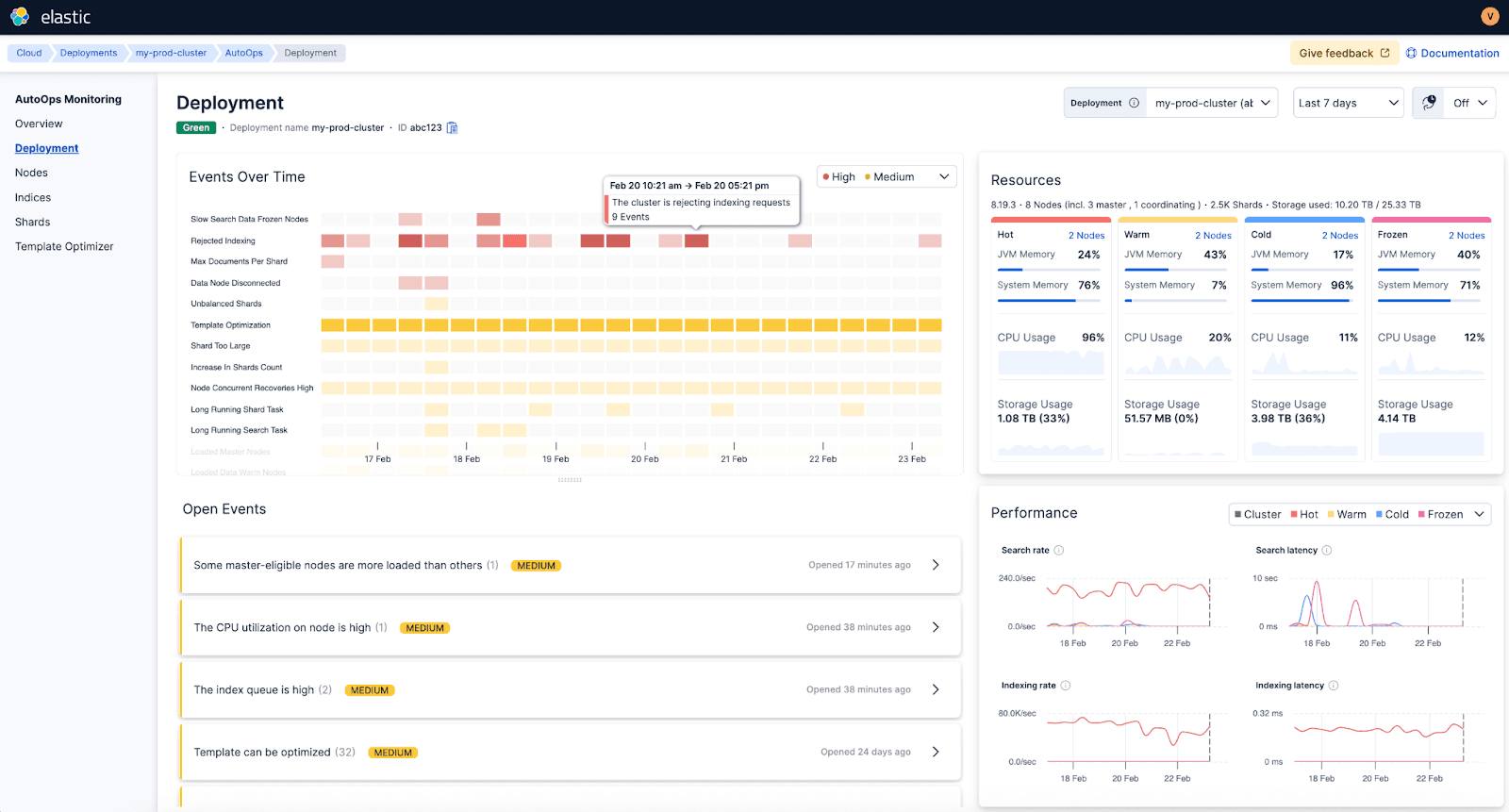

Elastic AutoOps is a SaaS service from Elastic that helps you gain critical insight into your cluster operation. It collects operational metadata (node stats, cluster settings, shard states, etc.) and ships it to the AutoOps service on Elastic Cloud for analytics and operational dashboards.

What does it mean for Elastic users and administrator teams?

https://www.elastic.co/search-labs/blog/elastic-autoops-free-for-self-managed-elasticsearch

This means that Elastic Cloud provides a free cloud service to monitor your cluster. (It does not collect the payload data in your cluster, only metadata about cluster operations.)
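To give a feel for what "metadata, not payload" means, here is a small Python sketch listing standard Elasticsearch REST endpoints that expose node stats, cluster settings and shard states. Whether the AutoOps agent calls exactly these endpoints is an assumption on my side; the base URL and helper names are also just for illustration, and `fetch_metadata` is defined but not run, since it needs a reachable cluster without authentication.

```python
import json
from urllib.request import urlopen

# Standard Elasticsearch APIs that return operational metadata only,
# never the documents stored in your indices.
METADATA_ENDPOINTS = {
    "node_stats": "/_nodes/stats",
    "cluster_settings": "/_cluster/settings",
    "shard_states": "/_cat/shards?format=json",
}


def metadata_urls(base_url: str) -> dict:
    """Build the full URL for each metadata endpoint of one cluster."""
    base = base_url.rstrip("/")
    return {name: base + path for name, path in METADATA_ENDPOINTS.items()}


def fetch_metadata(base_url: str) -> dict:
    """Fetch all three metadata documents from a reachable cluster."""
    result = {}
    for name, url in metadata_urls(base_url).items():
        with urlopen(url, timeout=10) as response:
            result[name] = json.load(response)
    return result


# Hypothetical local cluster address, used only to show the resulting URLs.
urls = metadata_urls("http://localhost:9200/")
```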

For those who use this free service, it could significantly reduce the operational overhead of managing an ELK cluster: Elastic provides a best-practice, AI-driven monitoring framework for your cluster, along with recommendations for mitigations.

A screenshot from the Elastic AutoOps intro.

Well, we all know from life experience that "free" products and services are often not so "free" in other respects. This is a service that costs computing power and maintenance, but I guess it gives Elastic, as the product vendor, critical insight into how customers use its products and into what goes right and wrong with product implementations out in the field. Of course, this insight is worth a lot for product development and for commercial reasons.

For users and administrators of an ELK cluster, the pros and cons are obvious:

Pros:

• No need to reinvent the wheel and build up-to-date routines and tooling setups for monitoring the cluster when a best-in-class tool is free to use

• Significantly reduced lead time for troubleshooting, with the support of AI engines online at Elastic Cloud

• No more reliance on a key person or competence to manage cluster operation on a day-to-day basis

• Developers, SREs and IT security analysts who are heavy users of the ELK stack get a real-time view of how the cluster is working if they hit a performance issue or need to troubleshoot

Cons:

• You need to submit the cluster metadata to Elastic Cloud through the AutoOps agent

• Monitoring of the cluster becomes more of a black box and you just consume the data (this may not be a con, as end users are more interested in the outcome of Elastic solutions than in the cluster itself)

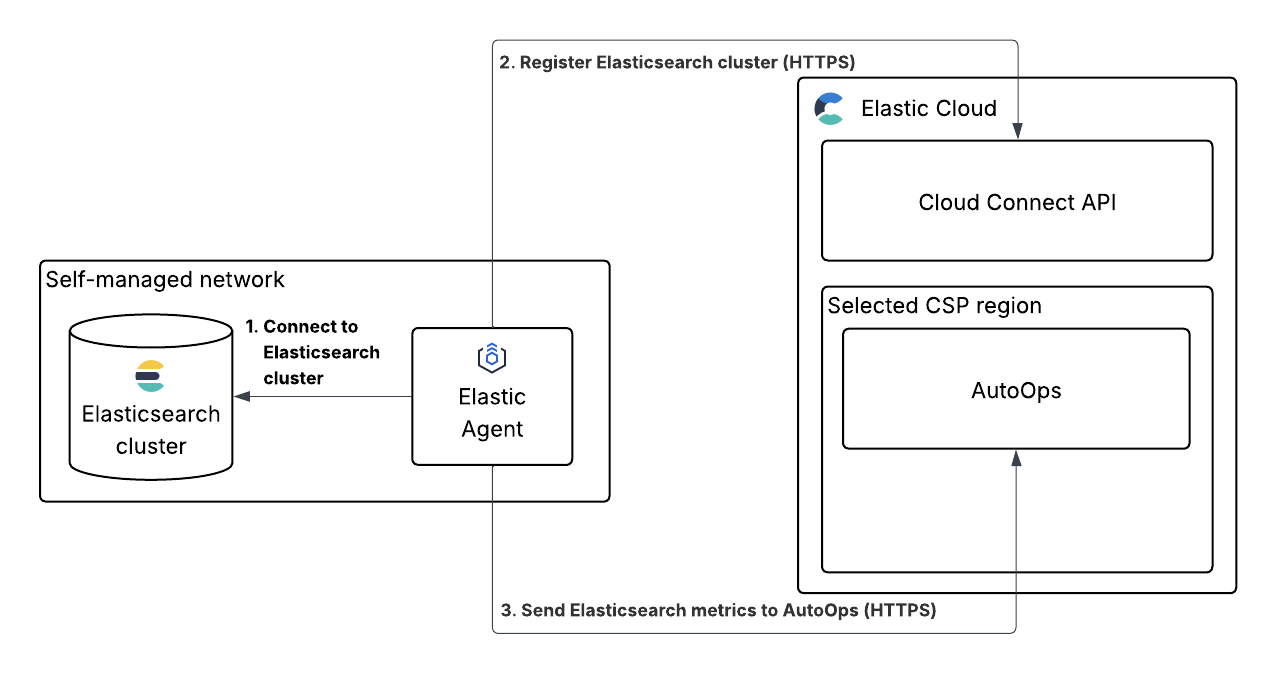

How does this AutoOps agent work? (source Elastic doc)

Impact on organizations and teams using the Elastic stack for search, observability and security:

• You probably no longer need as many ELK cluster operations resources as earlier, when this monitoring was solely an in-house activity

• Users of the Elastic stack get transparency into how the cluster is working right now, which significantly reduces their troubleshooting time when they hit issues

• It becomes easier to further develop and expand the cluster, as shortcomings of the current environment become much clearer through the insightful data in AutoOps

• The question we need to ask is what we will do if this feature starts to be charged for; the savings from needing fewer people for monitoring may justify a price tag

Before the cloud age, vendors would probably have sold a tool or function like AutoOps as an additional feature with a license fee. Now Elastic chooses to provide it as a free service in the cloud. For smaller organizations it is a no-brainer, and many are probably already running on serverless. For others, it provides an opportunity to move cost from maintenance resources to more AI-driven automation and operation in the future. This is happening anyway with the rapid expansion of agentic AI.

Observability in the Agentic AI era

On Feb 21, LangChain published a very insightful and structured paper about agent observability in the agentic AI era, which is taking the industry by storm.

https://blog.langchain.com/agent-observability-powers-agent-evaluation/

Here are some short summaries of the content:

New challenges compared with traditional software debugging:

• From debugging code to debugging reasoning

• A change in software testing methodology, since agent behavior emerges only at runtime

• Major observability components like runs, traces and threads in agent calls

• Tracing data for debugging purposes will grow enormously

Mitigations:

• Single-step evaluation

• Full-turn evaluation

• Multi-turn evaluation
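The three evaluation levels map onto the three primitives: a single step is a run, a full turn is a trace, and a multi-turn session is a thread. The Python sketch below shows that mapping with deliberately naive checks. The class layout, the tool names and the sample conversation are my own assumptions for illustration, not LangSmith's data model or API.

```python
from dataclasses import dataclass


@dataclass
class Run:                 # one LLM step: which tool was chosen, what came back
    tool: str
    output: str


@dataclass
class Trace:               # one full turn: user input through to final answer
    user_input: str
    runs: list
    final_output: str


@dataclass
class Thread:              # a multi-turn session: traces in conversation order
    traces: list


def eval_single_step(run: Run, expected_tool: str) -> bool:
    """Did the agent make the right decision at this specific step?"""
    return run.tool == expected_tool


def eval_full_turn(trace: Trace, must_contain: str) -> bool:
    """Did the agent complete the task end-to-end for this turn?"""
    return must_contain in trace.final_output


def eval_multi_turn(thread: Thread, remembered_fact: str) -> bool:
    """Is context from earlier turns still present in the last answer?"""
    return remembered_fact in thread.traces[-1].final_output


turn1 = Trace("I am Ada, book a flight to Oslo",
              [Run("search_flights", "3 flights found")],
              "Booked a flight to Oslo for Ada")
turn2 = Trace("Also add a hotel",
              [Run("search_hotels", "5 hotels found")],
              "Added a hotel in Oslo for Ada")
session = Thread([turn1, turn2])

step_ok = eval_single_step(turn1.runs[0], "search_flights")
turn_ok = eval_full_turn(turn1, "Oslo")
session_ok = eval_multi_turn(session, "Ada")
```

In practice the string checks would be replaced by richer scoring, for example an LLM-as-judge, but the unit being judged at each level stays the same.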

Other evaluation concepts:

• Offline evaluation

• Online evaluation

• Ad-hoc evaluation

An example of a troubleshooting workflow for agents:

1. User reports incorrect behavior

2. Find the production trace

3. Extract the state at the failure point

4. Create a test case from that exact state

5. Fix and validate
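The workflow above can be sketched in a few lines of Python. This is a toy under stated assumptions: the in-memory `PRODUCTION_TRACES` store, the trace id, the step names and the pricing bug are all invented for the example, and a real setup would pull the trace from an observability platform instead.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    state: dict      # agent state going into the step
    output: str
    ok: bool         # False where the reported misbehavior shows up


# Hypothetical trace store, keyed by trace id.
PRODUCTION_TRACES = {
    "trace-42": [
        Step("lookup_order", {"order_id": "A1"}, "price=100", True),
        Step("compute_price", {"price": 100, "discount_pct": 20}, "120", False),
    ]
}


def find_failure(trace):
    """Return the first step that was flagged as incorrect, if any."""
    return next((step for step in trace if not step.ok), None)


def make_test_case(step, expected):
    """Freeze the exact state at the failure point into a regression test."""
    return {"step": step.name, "state": dict(step.state), "expected": expected}


def run_test(test_case, step_fn):
    """Replay the frozen state through a candidate implementation."""
    return step_fn(test_case["state"]) == test_case["expected"]


def buggy_price(state):      # adds the discount instead of applying it
    return str(state["price"] + state["discount_pct"])


def fixed_price(state):      # applies the percentage discount
    return str(state["price"] * (100 - state["discount_pct"]) // 100)


trace = PRODUCTION_TRACES["trace-42"]         # step 2: find the production trace
failing = find_failure(trace)                 # step 3: state at the failure point
case = make_test_case(failing, expected="80") # step 4: test case from that state
reproduced = not run_test(case, buggy_price)  # the bug is reproduced offline
validated = run_test(case, fixed_price)       # step 5: fix and validate
```

The design choice that matters is step 4: the test case is built from the captured production state, not from a hand-written input, so the fix is validated against exactly what went wrong.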

The blog also has a number of case studies on using LangSmith for agent observability troubleshooting. As this area is so new and fresh, most tooling vendors are still frantically catching up.

https://blog.langchain.com/tag/case-studies/

I asked Claude to provide me with a summary of the AI agent observability field; below is the summary table that Claude produced, based on the evaluation concepts from the LangChain blog post.

My takeaway:

If we think migrating from monitoring to observability is a challenge for microservices and Kubernetes containers, the degree of difficulty grows a hundredfold with agentic AI, due to the following changes in software behavior:

• Testing and verification happen only at run-time; traditional tests are obsolete

• The number of code lines to trace and debug grows to an astronomical level

• The non-deterministic nature of LLM reasoning outcomes

• The interaction model between AI agents

We are at the dawn of a new era, with many doors of opportunity open for innovation and new technology. Thanks to the smarter LLMs and tools we now have, these observability challenges, with their huge datasets and iterative testing cycles, are just what AI is good at.

Vendor Capability Comparison for Agent Observability

Summarized by Claude

The three IT observability incumbents (Dynatrace, Elastic, Splunk/Cisco) are all moving fast, but their approaches reflect their heritage.

The notable difference vs. purpose-built tools like LangSmith and Arize: the incumbents excel at correlating agent behavior with the full application/infrastructure stack, but LangSmith remains the only platform where Runs, Traces, and Threads are truly first-class primitives — particularly for building evaluation datasets directly from production traces, which is the most critical workflow the blog post describes.

Agent Observability: Vendor Capability Comparison

Mapping IT observability vendor solutions to the LangChain framework for agent observability — Runs · Traces · Threads · Evaluation

| Observability Area (LangChain Framework) | 🔵 Dynatrace (Grail + Davis AI + DT Intelligence) | 🟡 Elastic (Elastic Observability + EDOT) | 🟠 Splunk / Cisco (Observability Cloud + AppDynamics) | 🟣 Datadog (LLM Observability) | 🟢 New Relic (AI Monitoring) | 🔴 LangSmith (LangChain), purpose-built | ⚪ Arize AI (Phoenix + AX) |
|---|---|---|---|---|---|---|---|
| PRIMITIVE 1: RUNS. Capturing individual LLM execution steps (inputs, outputs, tool choices at each step) | | | | | | | |
| Single LLM Call Tracing: input/output capture per call | GA | GA | GA | GA | GA | GA | GA |
| Tool Call Visibility: which tools the agent invoked, with what arguments | GA | GA | GA | GA | Preview | GA | GA |
| Cost & Token Monitoring: token usage, cost-per-request tracking | GA | GA | GA | GA | GA | GA | GA |
| PRIMITIVE 2: TRACES. Capturing full agent execution trajectories (all steps, tool calls, nested structure) | | | | | | | |
| End-to-End Agent Trace: multi-step trajectory from input to final output | GA | GA | GA | GA | GA | GA | GA |
| Topology & Dependency Mapping: how agents, tools, and services relate to each other | GA | GA | GA | GA | Preview | GA | GA |
| RAG / Retrieval Observability: vector DB, retrieval quality, context grounding | GA | GA | GA | GA | GA | GA | GA |
| Guardrails & Safety Monitoring: content filtering, prompt injection, policy compliance | GA | GA | GA | GA | Preview | GA | GA |
| PRIMITIVE 3: THREADS. Multi-turn conversation context across sessions (state evolution, context accumulation) | | | | | | | |
| Multi-Turn Session Tracking: grouping traces into conversational threads | GA | GA | Preview (Alpha) | GA | Preview | GA | GA |
| State & Memory Tracking: how agent memory and artifacts change across turns | GA | Preview | Preview | Preview | Roadmap | GA | GA |
| EVALUATION. Assessing agent quality: single-step, full-turn, multi-turn; offline, online, and ad-hoc | | | | | | | |
| Single-Step Evaluation: did the agent make the right decision at a specific step? | GA | GA | Preview | GA | Preview | GA | GA |
| Full-Turn (Trajectory) Evaluation: did the agent execute the full task correctly end-to-end? | GA | GA | GA | GA | GA | GA | GA |
| Multi-Turn Evaluation: does the agent maintain context correctly over a full session? | Preview | Preview | Preview (Alpha) | Preview | Roadmap | GA | GA |
| Online (Production) Evaluation: continuous quality checks on live agent traffic | GA | GA | GA | GA | GA | GA | GA |
| Offline Evaluation / Datasets: building test suites from production traces; pre-deployment testing | GA | Preview | Preview (Alpha) | GA | Roadmap | GA | GA |
| Ad-Hoc Insights / AI-Assisted Analysis: querying traces at scale; pattern discovery; LLM-as-judge | GA | GA | GA | GA | GA | GA | GA |
| PLATFORM DIFFERENTIATORS. OTel alignment, framework support, unique strengths | | | | | | | |
| OpenTelemetry & Framework Support | GA | GA | GA | GA | GA | GA | GA |
| Key Differentiator / Unique Strength | 🔵 Causal AI + Deterministic Agents: Davis AI provides causal root cause analysis grounded in real-time Smartscape topology. Dynatrace Intelligence fuses deterministic + agentic AI for trusted autonomous operations. 12x better problem resolution vs. pure LLM agents. | 🟡 Search + Observability + Security Unified: Elastic combines LLM observability, security (SIEM), and search in one platform. Strong OTel ecosystem via EDOT. Leader in 2025 Gartner Magic Quadrant for Observability Platforms. | 🟠 Cisco AI Defense + AGNTCY Standards: Unique network/security heritage via Cisco integration enables AI risk detection at infrastructure level. Strong OpenTelemetry contribution and vendor-neutral AGNTCY standard for agent quality metrics. | 🟣 Breadth + APM Correlation: LLM traces integrated directly alongside existing APM, infra, and security data. LLM Experiments allows prompt testing pre-deployment. Watchdog AI for continuous anomaly detection. Google ADK first-mover integration. | 🟢 Application-Centric Depth + Pricing: Strong APM heritage with code-level diagnostics. Predictable data-ingestion pricing. SRE Agent integrates with ServiceNow, PagerDuty, GitHub for agentic remediation. 30% QoQ growth in AI Monitoring adoption. | 🔴 Purpose-Built for Agent Evaluation: Only vendor where Runs, Traces, and Threads are first-class primitives. Production traces automatically become offline test datasets. Deepest LangChain/LangGraph integration. Insights Agent for AI-assisted trace analysis at scale. | ⚪ ML Pedigree + Open Source: Only vendor with traditional ML model monitoring (drift, bias) converging with LLM agent observability. Arize Phoenix is open-source and OTel-native. Strong RAG evaluation with TruLens. Best embedding-level drift detection. |

Sources: LangChain Blog (Feb 2026), Dynatrace Docs & Blog (Jan–Feb 2026), Elastic Docs & Observability Labs (2025–2026), Splunk Blog & Docs (Q1 2026), Datadog, New Relic, Arize AI product documentation. Status as of February 2026. Features evolving rapidly — verify current availability with vendors.

Data summarized by Claude on Feb 26, 2026

Disclaimer: AI can make mistakes, for deep dive please doublecheck the answers on relevant sources.